Hands on with the Amazon DeepLens

The promise of machine learning on edge devices has taken a big step forward. Today I attended the annual AWS Summit in San Francisco. This year's event was significantly thinner on content than last year's (two days last year, one day this year). Quality, organization, and the number of vendors were also a bit diminished. For some reason, Comcast Business had a booth. Confusing.

I wasn't getting into any of the talks I wanted, so I camped outside the DeepLens/SageMaker talk for 45 minutes before the doors opened to secure a spot. If you don't know what DeepLens is, it's basically a single-board computer with a camera attached. The specs are better than the Raspberry Pi-type device I was expecting, though not amazing. As part of the Amazon ecosystem, it makes deploying a model to the device a relatively painless process, though the device's limitations don't lend themselves to actually training on it.

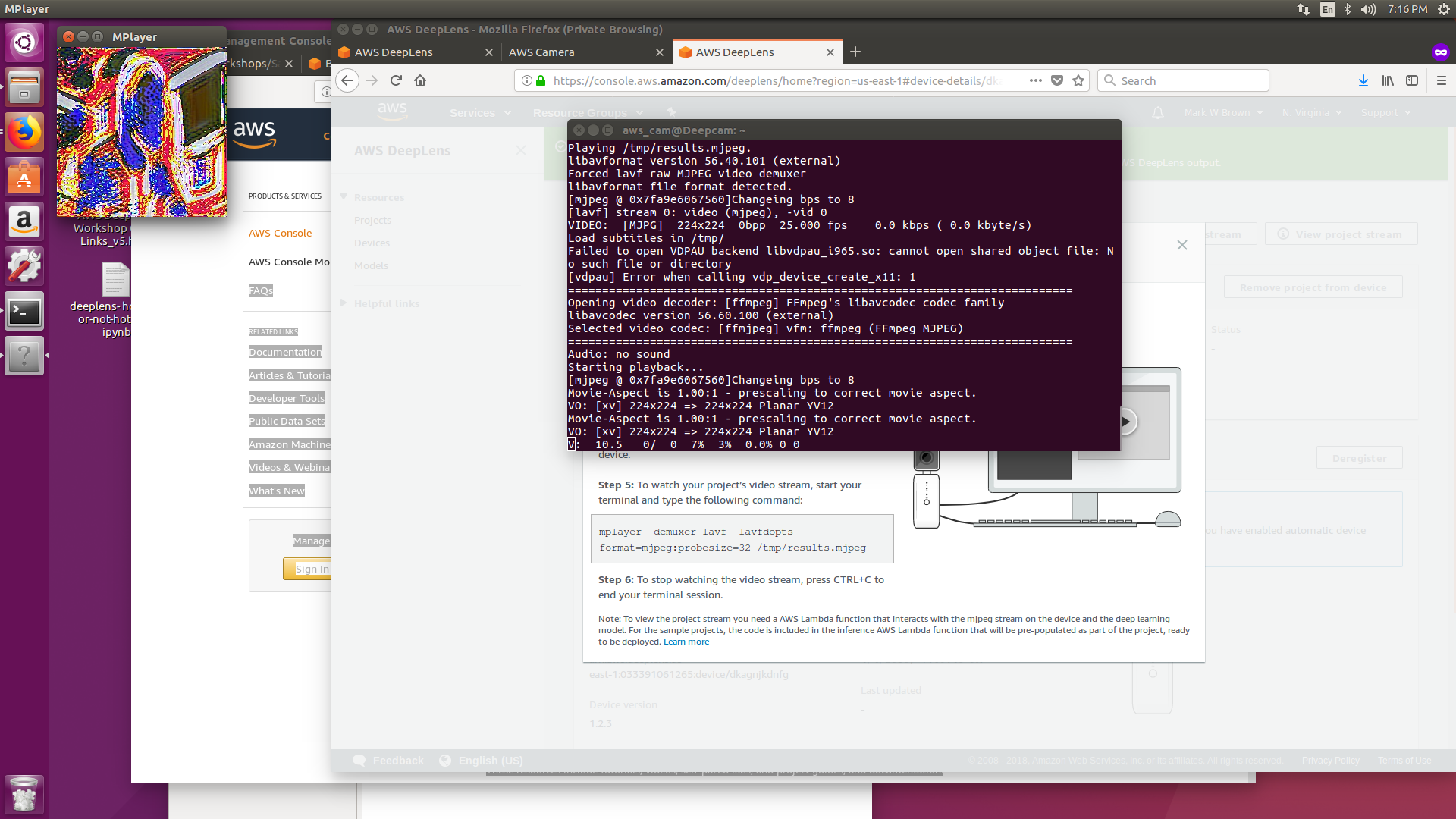

The hands-on demo walked through getting a Jupyter notebook running on the device, spinning up an instance in the cloud, and training a model. We didn't actually train a model due to time constraints. Instead we faked it and returned the result as if we had run the computationally heavy processes, which would have taken far longer than the hour or so we were in there. We then deployed a facial recognition model to the device, which did a reasonable job of detecting faces in the room. I had a bit of extra time, so I deployed a Van Gogh painting model to the device. Here is the output:

It may not be apparent given the dimly lit room, but that is me at the computer as seen from the DeepLens. Overall, pretty cool. A few bits of the setup process were a little clunky, but I'd say they are 99% of the way there in making desktop machine learning development a thing.

https://github.com/fibbonnaci/DeepLens-workshops